Difference between revisions of "Docker"


Revision as of 20:15, 26 June 2018


Manage Virtual Hosts with docker-machine

Introduction

Docker Machine is a tool that lets you install Docker Engine on virtual hosts, and manage the hosts with docker-machine commands. You can use Machine to create Docker hosts on your local Mac or Windows box, on your company network, in your data center, or on cloud providers like Azure, AWS, or Digital Ocean.

Using docker-machine commands, you can start, inspect, stop, and restart a managed host, upgrade the Docker client and daemon, and configure a Docker client to talk to your host.

When people say “Docker” they typically mean Docker Engine, the client-server application made up of the Docker daemon, a REST API that specifies interfaces for interacting with the daemon, and a command line interface (CLI) client that talks to the daemon (through the REST API wrapper). Docker Engine accepts docker commands from the CLI, such as docker run <image>, docker ps to list running containers, docker image ls to list images, and so on.

Docker Machine is a tool for provisioning and managing your Dockerized hosts (hosts with Docker Engine on them). Typically, you install Docker Machine on your local system. Docker Machine has its own command line client docker-machine and the Docker Engine client, docker. You can use Machine to install Docker Engine on one or more virtual systems. These virtual systems can be local (as when you use Machine to install and run Docker Engine in VirtualBox on Mac or Windows) or remote (as when you use Machine to provision Dockerized hosts on cloud providers). The Dockerized hosts themselves can be thought of, and are sometimes referred to as, managed “machines”.

Source: https://docs.docker.com/machine/overview/

Hypervisor drivers

What is a driver

A machine is created with the docker-machine create command. The simplest usage is:

docker-machine create -d <hypervisor driver name> <driver options> <machine name>

The default value of the driver parameter is "virtualbox".

docker-machine can create and manage virtual hosts on the local machine and on remote clouds. The chosen driver always determines where and how the virtual machine is created. The guest operating system installed on the new machine is also determined by the hypervisor driver. For example, with the "virtualbox" driver you can create machines locally using boot2docker as the guest OS.

The driver also determines the virtual network types and interfaces types that are created inside the virtual machine. E.g. the KVM driver creates two virtual networks (bridges), one host-global and one host-private network.

The driver options available in the docker-machine create command are also determined by the driver. Always check the available options in the documentation of the driver's vendor. For cloud drivers, typical options are the remote URL, the login name, and the password. Some drivers also allow changing the guest OS, the number of CPUs, or the default memory.

Here is a complete list of the currently available drivers: https://github.com/docker/docker.github.io/blob/master/machine/AVAILABLE_DRIVER_PLUGINS.md

KVM driver

KVM driver home page: https://github.com/dhiltgen/docker-machine-kvm

Minimum Parameters:

- --driver kvm

- --kvm-network: The name of the kvm virtual (public) network that we would like to use. If this is not set, the new machine will be connected to the "default" KVM virtual network.

Images:

By default docker-machine-kvm uses boot2docker.iso as the guest OS for the KVM hypervisor. Any guest OS image derived from boot2docker.iso can be used as well. To use another image, set the --kvm-boot2docker-url parameter.

Dual Network:

- eth1 - A host private network called docker-machines is automatically created to ensure we always have connectivity to the VMs. The docker-machine ip command will always return this IP address which is only accessible from your local system.

- eth0 - You can specify any libvirt named network. If you don't specify one, the "default" named network will be used.

If you have an exotic networking topology (openvswitch, etc.), you can use virsh edit mymachinename after creation, modify the first network definition by hand, then reboot the VM for the changes to take effect. Typically this would be your "public" network, accessible from external systems. To retrieve the IP address of this network, you can run a command like the following:

docker-machine ssh mymachinename "ip -one -4 addr show dev eth0|cut -f7 -d' '"
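The cut-based command above depends on the exact number of spaces in the ip output. A slightly more tolerant sketch, assuming the usual one-line layout of `ip -o -4 addr show` (index, interface, family, address/prefix), splits on whitespace with awk instead. The function name `first_ipv4` and the sample line are illustrative and not part of docker-machine.

```shell
#!/bin/sh
# Print the IPv4 address (without the /prefix) found in `ip -o -4 addr show`
# output read from stdin. Assumes the address/prefix is the 4th field.
first_ipv4() {
    awk '$3 == "inet" { split($4, a, "/"); print a[1]; exit }'
}

# Sample line in the format printed by `ip -o -4 addr show dev eth0` (illustrative).
sample='2: eth0    inet 192.168.123.195/24 brd 192.168.123.255 scope global eth0'
printf '%s\n' "$sample" | first_ipv4

# Against a real machine (requires docker-machine and a VM named "manager"):
#   docker-machine ssh manager "ip -o -4 addr show dev eth0" | first_ipv4
```

The helper only parses text, so it can be tried out without a running VM.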

Driver Parameters:

- --kvm-cpu-count Sets the used CPU Cores for the KVM Machine. Defaults to 1 .

- --kvm-disk-size Sets the kvm machine Disk size in MB. Defaults to 20000 .

- --kvm-memory Sets the Memory of the kvm machine in MB. Defaults to 1024.

- --kvm-network Sets the network that the KVM machine should connect to. Defaults to default.

- --kvm-boot2docker-url Sets the url from which host the image is loaded. By default it's not set.

- --kvm-cache-mode Sets the caching mode of the kvm machine. Defaults to default.

- --kvm-io-mode-url Sets the disk io mode of the kvm machine. Defaults to threads.
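As a rough illustration of how these parameters combine, the sketch below only prints a create command; it does not run it. The helper name `kvm_create_cmd` and the machine name `worker1` are hypothetical. The flags and their defaults are the ones listed above.

```shell
#!/bin/sh
# Hypothetical helper: print (not run) a docker-machine create command for the
# KVM driver. Arguments: machine name, KVM network, CPU count, memory in MB.
# Omitted arguments fall back to the driver defaults listed above.
kvm_create_cmd() {
    name="$1"; network="${2:-default}"; cpus="${3:-1}"; memory="${4:-1024}"
    printf 'docker-machine create -d kvm --kvm-network %s --kvm-cpu-count %s --kvm-memory %s %s\n' \
        "$network" "$cpus" "$memory" "$name"
}

kvm_create_cmd worker1 docker-network 2 2048
```

Printing the command first makes it easy to review the options before creating a VM.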

Install software

First we have to install the docker-machine app itself:

base=https://github.com/docker/machine/releases/download/v0.14.0 && curl -L $base/docker-machine-$(uname -s)-$(uname -m) >/tmp/docker-machine && sudo install /tmp/docker-machine /usr/local/bin/docker-machine

Secondly, we have to install the hypervisor driver so that docker-machine can create and manage virtual machines running on the hypervisor. As we are going to use the KVM hypervisor, we have to install the "docker-machine-driver-kvm" driver:

# curl -Lo docker-machine-driver-kvm https://github.com/dhiltgen/docker-machine-kvm/releases/download/v0.7.0/docker-machine-driver-kvm && chmod +x docker-machine-driver-kvm && sudo mv docker-machine-driver-kvm /usr/local/bin

We assume that KVM and libvirt are already installed on the system.

Tip

If you want to use VirtualBox as your hypervisor, no extra steps are needed, as its docker-machine driver is included in the docker-machine app

Available 3rd party drivers:

https://github.com/docker/docker.github.io/blob/master/machine/AVAILABLE_DRIVER_PLUGINS.md

Create machines with KVM

Create the machine

Before a new machine can be created with the docker-machine command, the proper KVM virtual network must be created.

See How to create KVM networks for details.

# docker-machine create -d kvm --kvm-network "docker-network" manager
Running pre-create checks...
Creating machine...
(manager) Copying /root/.docker/machine/cache/boot2docker.iso to /root/.docker/machine/machines/manager/boot2docker.iso...
Waiting for machine to be running, this may take a few minutes...
Detecting operating system of created instance...
Waiting for SSH to be available...
Detecting the provisioner...
Provisioning with boot2docker...
Copying certs to the local machine directory...
Copying certs to the remote machine...
Setting Docker configuration on the remote daemon...
Checking connection to Docker...
Docker is up and running!
To see how to connect your Docker Client to the Docker Engine running on this virtual machine, run: docker-machine env manager

Tip

The machine is created under the /USER_HOME/.docker/machine/machines/<machine_name> directory.

If the new VM was created with the virtualbox driver, the VirtualBox graphical management interface must be started by the same user that created the VM. VirtualBox will then discover the new VM automatically.

Check what was created

Interfaces on the host

# ifconfig

eno1: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 192.168.0.105 netmask 255.255.255.0 broadcast 192.168.0.255

....

virbr1: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 192.168.42.1 netmask 255.255.255.0 broadcast 192.168.42.255

...

virbrDocker: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 192.168.123.1 netmask 255.255.255.0 broadcast 192.168.123.255

inet6 2001:db8:ca2:2::1 prefixlen 64 scopeid 0x0<global>

...

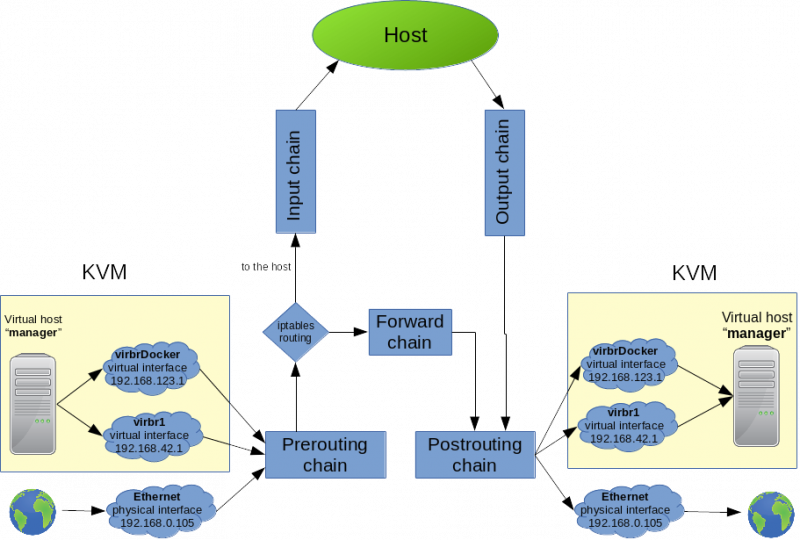

On the host, in addition to the regular interfaces, we can see the two bridges for the two virtual networks:

- virbrDocker: That is the virtual network that we created in libvirt. This is connected to the host network with NAT. We assigned these IP addresses, when we defined the network.

- virbr1: That is the host-only virtual network that was created out-of-the-box. This one has no internet access.

Interfaces on the new VM

You can log in to the newly created VM with the docker-machine ssh <machine_name> command. On the newly created Docker-ready VM, four interfaces were created.

# docker-machine ssh manager

## .

## ## ## ==

## ## ## ## ## ===

/"""""""""""""""""\___/ ===

~~~ {~~ ~~~~ ~~~ ~~~~ ~~~ ~ / ===- ~~~

\______ o __/

\ \ __/

\____\_______/

_ _ ____ _ _

| |__ ___ ___ | |_|___ \ __| | ___ ___| | _____ _ __

| '_ \ / _ \ / _ \| __| __) / _` |/ _ \ / __| |/ / _ \ '__|

| |_) | (_) | (_) | |_ / __/ (_| | (_) | (__| < __/ |

|_.__/ \___/ \___/ \__|_____\__,_|\___/ \___|_|\_\___|_|

Boot2Docker version 18.05.0-ce, build HEAD : b5d6989 - Thu May 10 16:35:28 UTC 2018

Docker version 18.05.0-ce, build f150324

Check the interfaces of the new VM:

docker@manager:~$ ifconfig

docker0 inet addr:172.17.0.1 Bcast:172.17.255.255 Mask:255.255.0.0

...

eth0 inet addr:192.168.123.195 Bcast:192.168.123.255 Mask:255.255.255.0

...

eth1 inet addr:192.168.42.118 Bcast:192.168.42.255 Mask:255.255.255.0

- eth0:192.168.123.195 - Interface to the new virtual network (docker-network) that we created. This network is connected to the host network, so it has public internet access as well.

- eth1:192.168.42.118 - This connects to the dynamically created host-only virtual network, used only for VM-to-VM communication.

- docker0:172.17.0.1 - This VM is meant to host Docker containers, so the Docker daemon was already installed and started on it. From Docker's point of view, this VM is also a (Docker) host, and therefore the Docker daemon created the default virtual bridge, which containers are connected to unless specified otherwise explicitly during container creation.

Inspect the new VM with the docker-machine inspect command

# docker-machine inspect manager

{

"ConfigVersion": 3,

"Driver": {

....

"CPU": 1,

"Network": "docker-network",

"PrivateNetwork": "docker-machines",

"ISO": "/root/.docker/machine/machines/manager/boot2docker.iso",

"...

},

"DriverName": "kvm",

"HostOptions": {

....

},

"SwarmOptions": {

"IsSwarm": false,

...

},

"AuthOptions": {

....

}

},

"Name": "manager"

}

Routing table

All the packets destined for the Docker VMs are routed to the bridges:

# route
Kernel IP routing table
Destination     Gateway         Genmask         Flags Metric Ref    Use Iface
...
192.168.42.0    0.0.0.0         255.255.255.0   U     0      0        0 virbr1       <<<< this
192.168.123.0   0.0.0.0         255.255.255.0   U     0      0        0 virbrDocker  <<<< this
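To see why a given VM address ends up on a particular bridge, the route lookup can be worked through by hand: the destination is ANDed with the netmask and compared with the route's network. The sketch below does this for the two routes shown above. The function names are illustrative, and it covers only these two /24 routes, not the kernel's full longest-prefix lookup.

```shell
#!/bin/bash
# Convert a dotted-quad IPv4 address to a 32-bit integer.
ip_to_int() {
    local IFS=.
    set -- $1
    echo $(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
}

# Succeed if address $1 falls inside network $2 with netmask $3.
in_network() {
    local addr net mask
    addr=$(ip_to_int "$1"); net=$(ip_to_int "$2"); mask=$(ip_to_int "$3")
    [ $(( addr & mask )) -eq $(( net & mask )) ]
}

# The two routes from the table above: destination network, netmask, bridge.
route_for() {
    if in_network "$1" 192.168.42.0 255.255.255.0; then echo virbr1
    elif in_network "$1" 192.168.123.0 255.255.255.0; then echo virbrDocker
    else echo other
    fi
}

route_for 192.168.42.118     # eth1 of the VM
route_for 192.168.123.195    # eth0 of the VM
```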

IPtables modifications

Switches:

- -o, --out-interface name

- -i, --in-interface name

- -s, source IP address

- -d, destination IP address

- -p, Sets the IP protocol for the rule

- -j, jump to the given target/chain

DNS and DHCP packets from the virtual bridges are allowed to be sent to the host machine.

-A INPUT -i virbr1 -p udp -m udp --dport 53 -j ACCEPT
-A INPUT -i virbr1 -p tcp -m tcp --dport 53 -j ACCEPT
-A INPUT -i virbr1 -p udp -m udp --dport 67 -j ACCEPT
-A INPUT -i virbr1 -p tcp -m tcp --dport 67 -j ACCEPT
-A INPUT -i virbrDocker -p udp -m udp --dport 53 -j ACCEPT
-A INPUT -i virbrDocker -p tcp -m tcp --dport 53 -j ACCEPT
-A INPUT -i virbrDocker -p udp -m udp --dport 67 -j ACCEPT
-A INPUT -i virbrDocker -p tcp -m tcp --dport 67 -j ACCEPT

The host machine is allowed to send DHCP packets to the virtual bridges in order to configure them.

-A OUTPUT -o virbr1 -p udp -m udp --dport 68 -j ACCEPT
-A OUTPUT -o virbrDocker -p udp -m udp --dport 68 -j ACCEPT

The bridge virbrDocker can receive packets back if the connection was previously established (first line) and can send packets anywhere (second line).

-A FORWARD -d 192.168.123.0/24 -o virbrDocker -m conntrack --ctstate RELATED,ESTABLISHED -j ACCEPT
-A FORWARD -s 192.168.123.0/24 -i virbrDocker -j ACCEPT

The bridges can send packets to themselves; otherwise everything that is sent to or from the bridges is rejected.

-A FORWARD -i virbrDocker -o virbrDocker -j ACCEPT
-A FORWARD -i virbr1 -o virbr1 -j ACCEPT
# If not accepted above, we reject everything from the two bridges
-A FORWARD -o virbrDocker -j REJECT --reject-with icmp-port-unreachable
-A FORWARD -i virbrDocker -j REJECT --reject-with icmp-port-unreachable
-A FORWARD -o virbr1 -j REJECT --reject-with icmp-port-unreachable
-A FORWARD -i virbr1 -j REJECT --reject-with icmp-port-unreachable

The bridge virbrDocker can send packets to the outside world. (MASQUERADE is a special SNAT target for which the new source IP doesn't have to be specified. SNAT replaces the source IP address of the packet with the public IP address of our system, and MASQUERADE takes that address from the outgoing interface.)

Last two lines: packets sent to the multicast and broadcast addresses are meant to be exempted from NAT. RETURN leaves the chain without masquerading them; it does not block them.

-A POSTROUTING -s 192.168.123.0/24 ! -d 192.168.123.0/24 -p tcp -j MASQUERADE --to-ports 1024-65535
-A POSTROUTING -s 192.168.123.0/24 ! -d 192.168.123.0/24 -p udp -j MASQUERADE --to-ports 1024-65535
-A POSTROUTING -s 192.168.123.0/24 ! -d 192.168.123.0/24 -j MASQUERADE
-A POSTROUTING -s 192.168.123.0/24 -d 224.0.0.0/24 -j RETURN
-A POSTROUTING -s 192.168.123.0/24 -d 255.255.255.255/32 -j RETURN
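When the full rule set is long, it can help to pull out only the rules that mention one bridge. The following sketch filters rule text by interface name. The helper name `rules_for_iface` is illustrative, and the sample input is a small subset of the rules quoted above.

```shell
#!/bin/sh
# Print the rules from stdin that name the given interface with -i or -o.
rules_for_iface() {
    grep -E -- "-(i|o) $1( |\$)"
}

# A few of the filter rules quoted above, used as sample input.
sample_rules='-A INPUT -i virbr1 -p udp -m udp --dport 53 -j ACCEPT
-A INPUT -i virbrDocker -p udp -m udp --dport 53 -j ACCEPT
-A OUTPUT -o virbrDocker -p udp -m udp --dport 68 -j ACCEPT
-A FORWARD -i virbr1 -o virbr1 -j ACCEPT'

printf '%s\n' "$sample_rules" | rules_for_iface virbrDocker

# On a live host (as root) the same filter can be fed from: iptables -S
```

Note that the NAT rules above match on source and destination networks rather than on an interface, so this filter would not select them.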

Manage machines

List/Inspect

With the ls subcommand we can list all the docker-machine managed hosts.

# docker-machine ls
NAME      ACTIVE   DRIVER   STATE     URL                         SWARM   DOCKER        ERRORS
manager   -        kvm      Running   tcp://192.168.42.118:2376           v18.05.0-ce

- NAME: name of the created machine

- ACTIVE: from the Docker client's point of view, the active virtual host can be managed with the docker and docker-compose commands from the local host, as if we executed these commands on the remote virtual host. At most one machine can be active at a time; it is marked with an asterisk '*' in the ls output.

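- DRIVER: the driver the machine was created with (kvm in this example)

- STATE: the current state of the machine (Running in this example)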

- URL: The URL (protocol, IP address, and port) of the Docker daemon on the virtual host.

- SWARM:

With the inspect <machine name> subcommand we can get very detailed information about a specific machine:

# docker-machine inspect manager

{

"ConfigVersion": 3,

"Driver": {

"IPAddress": "",

"MachineName": "manager",

"SSHUser": "docker",

"SSHPort": 22,

"SSHKeyPath": "",

"StorePath": "/root/.docker/machine",

"SwarmMaster": false,

"SwarmHost": "tcp://0.0.0.0:3376",

"SwarmDiscovery": "",

"Memory": 1024,

"DiskSize": 20000,

"CPU": 1,

"Network": "docker-network",

"PrivateNetwork": "docker-machines",

"ISO": "/root/.docker/machine/machines/manager/boot2docker.iso",

...

},

"DriverName": "kvm",

"HostOptions": {

...

...

"Name": "manager"

}

Set Active machine

With our local docker client we can connect to the Docker daemon of any of the virtual hosts. The virtual host that we manage locally is called the "active" host. From the Docker client's point of view, the active virtual host can be managed with the docker and docker-compose commands from the local host, as if we executed these commands on the (remote) virtual host.

We can make any docker-machine managed virtual host active with the 'docker-machine env <machine name>' command. Docker gets its connection information from environment variables, and this command prints the variables that redirect our docker CLI. Run this command on the host.

# docker-machine env manager
export DOCKER_TLS_VERIFY="1"
export DOCKER_HOST="tcp://192.168.42.118:2376"
export DOCKER_CERT_PATH="/root/.docker/machine/machines/manager"
export DOCKER_MACHINE_NAME="manager"
# Run this command to configure your shell:
# eval $(docker-machine env manager)

As the output of the env command suggests, you have to run the eval command in the shell that you want to use to manage the active virtual host.

# eval $(docker-machine env manager)
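The eval step is needed because docker-machine env only prints export statements; it cannot change the environment of the calling shell by itself. The sketch below shows the mechanism with a stand-in function (`fake_machine_env`, a hypothetical name) that prints the same kind of lines, so it can be tried without a VM.

```shell
#!/bin/sh
# Stand-in for `docker-machine env manager`: prints the same kind of export
# lines. The values are copied from the output above; no VM is contacted.
fake_machine_env() {
    cat <<'EOF'
export DOCKER_TLS_VERIFY="1"
export DOCKER_HOST="tcp://192.168.42.118:2376"
export DOCKER_CERT_PATH="/root/.docker/machine/machines/manager"
export DOCKER_MACHINE_NAME="manager"
EOF
}

# eval executes the printed lines in the current shell, so the variables
# persist here and are inherited by every docker command started afterwards.
eval "$(fake_machine_env)"
echo "docker client now targets: $DOCKER_HOST"
```

Running the env command without eval would only display the lines and leave the shell unchanged.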

Now, in the same shell, run the ls command again. The machine 'manager' will be marked with the asterisk in the ACTIVE column.

# docker-machine ls
NAME      ACTIVE   DRIVER   STATE     URL                         SWARM   DOCKER        ERRORS
manager   *        kvm      Running   tcp://192.168.42.118:2376           v18.05.0-ce
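In scripts it is sometimes useful to read the active machine from this listing. A minimal sketch, assuming the column layout shown above (NAME first, ACTIVE second); the helper name `active_machine` is illustrative. The docker-machine active subcommand reports the same information directly.

```shell
#!/bin/sh
# Print the NAME of the row whose ACTIVE column is "*" in `docker-machine ls`
# output read from stdin. Prints nothing if no machine is active.
active_machine() {
    awk 'NR > 1 && $2 == "*" { print $1 }'
}

# Sample listing copied from the output above.
sample_ls='NAME      ACTIVE   DRIVER   STATE     URL                         SWARM   DOCKER        ERRORS
manager   *        kvm      Running   tcp://192.168.42.118:2376           v18.05.0-ce'

printf '%s\n' "$sample_ls" | active_machine

# With a real installation: docker-machine ls | active_machine
```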

In the same shell on the host machine, create a docker container:

# docker run -d -i -t --name container1 ubuntu /bin/bash
9dfda56f7739831b0d19c8acd95748b1c93f6c6bb82d2aa87cfb10ecee0e4f28

Now we will log on to the virtual host with the ssh <machine name> command.

# docker-machine ssh manager

## .

## ## ## ==

## ## ## ## ## ===

/"""""""""""""""""\___/ ===

~~~ {~~ ~~~~ ~~~ ~~~~ ~~~ ~ / ===- ~~~

\______ o __/

\ \ __/

\____\_______/

_ _ ____ _ _

| |__ ___ ___ | |_|___ \ __| | ___ ___| | _____ _ __

| '_ \ / _ \ / _ \| __| __) / _` |/ _ \ / __| |/ / _ \ '__|

| |_) | (_) | (_) | |_ / __/ (_| | (_) | (__| < __/ |

|_.__/ \___/ \___/ \__|_____\__,_|\___/ \___|_|\_\___|_|

Boot2Docker version 18.05.0-ce, build HEAD : b5d6989 - Thu May 10 16:35:28 UTC 2018

Docker version 18.05.0-ce, build f150324

docker@manager:~$

List the available Docker containers. We should see the newly created container1 there. The docker run command was executed on the host, but it ran on the remote virtual host.

docker@manager:~$ docker ps
CONTAINER ID   IMAGE    COMMAND       CREATED         STATUS         PORTS   NAMES
9dfda56f7739   ubuntu   "/bin/bash"   5 minutes ago   Up 5 minutes           container1

Running the docker ps command on the host should give the same result.

Unset active machine

The active machine can be unset with the "--unset" switch. Once the active docker machine was unset, the docker client will manage the local docker daemon again.

# docker-machine env --unset
unset DOCKER_TLS_VERIFY
unset DOCKER_HOST
unset DOCKER_CERT_PATH
unset DOCKER_MACHINE_NAME
# Run this command to configure your shell:
# eval $(docker-machine env --unset)

As the output suggests, we have to run the eval command again with the --unset switch to clear the shell. Alternatively, you can just start a new shell.

# eval $(docker-machine env --unset)

Now let's run the ps command again. As the docker client is now connected to the local Docker daemon, we shouldn't see container1 in the list anymore.

# docker ps
CONTAINER ID   IMAGE     COMMAND   CREATED        STATUS         PORTS   NAMES
66e9cfbbc947   busybox   "sh"      30 hours ago   Up 4 minutes           critcon
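Because the target daemon is chosen purely by environment variables, it is easy to lose track of where a shell points. The small sketch below reports it. The function name `docker_target` is illustrative, and it assumes that an unset DOCKER_HOST means the local daemon, as described above.

```shell
#!/bin/sh
# Report which daemon the docker client in this shell would talk to,
# based only on the variables set by `docker-machine env`.
docker_target() {
    if [ -n "${DOCKER_HOST:-}" ]; then
        echo "remote: ${DOCKER_MACHINE_NAME:-unknown} ($DOCKER_HOST)"
    else
        echo "local daemon"
    fi
}

docker_target
```

Calling it before a destructive command such as docker rm is a cheap safeguard against acting on the wrong host.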

Services

Introduction

In a distributed application, different pieces of the app are called “services.” Services are really just “containers in production.” A service only runs one image, but it codifies the way that image runs—what ports it should use, how many replicas of the container should run so the service has the capacity it needs, and so on. Scaling a service changes the number of container instances running that piece of software, assigning more computing resources to the service in the process.

Luckily it’s very easy to define, run, and scale services with the Docker platform -- just write a docker-compose.yml file.

Source: https://docs.docker.com/get-started/part3/#prerequisites

YAML

YAML /'jæm.ḷ/ is a human-readable data serialization language. It is commonly used for configuration files, but could be used in many applications where data is being stored (e.g. debugging output) or transmitted (e.g. document headers). YAML targets many of the same communications applications as XML but has a minimal syntax which intentionally breaks compatibility with SGML [1]. It uses both Python-style indentation to indicate nesting, and a more compact format that uses [] for lists and {} for maps making YAML 1.2 a superset of JSON. Custom data types are allowed, but YAML natively encodes scalars (such as strings, integers, and floats), lists, and associative arrays (also known as hashes, maps, or dictionaries).

Docker Composition

Introduction

Compose is a tool for defining and running multi-container Docker applications. With Compose, you use a YAML file to configure your application’s services. Then, with a single command, you build the images, create the containers, and start all the services from your configuration.

Multiple isolated environments on a single host

Compose uses a project name to isolate environments from each other. You can make use of this project name in several different contexts:

- on a dev host, to create multiple copies of a single environment, such as when you want to run a stable copy for each feature branch of a project

- on a CI server, to keep builds from interfering with each other, you can set the project name to a unique build number

Development environments

When you’re developing software, the ability to run an application in an isolated environment and interact with it is crucial. The Compose command line tool can be used to create the environment and interact with it.

- The Compose file provides a way to document and configure all of the application’s service dependencies (databases, queues, caches, web service APIs, etc). Using the Compose command line tool you can create and start one or more containers for each dependency with a single command (docker-compose up).

- Together, these features provide a convenient way for developers to get started on a project. Compose can reduce a multi-page “developer getting started guide” to a single machine readable Compose file and a few commands.

Automated testing environments

An important part of any Continuous Deployment or Continuous Integration process is the automated test suite. Automated end-to-end testing requires an environment in which to run tests. Compose provides a convenient way to create and destroy isolated testing environments for your test suite. By defining the full environment in a Compose file, you can create and destroy these environments in just a few commands:
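One way these few commands might be wrapped is sketched below. The helper `run_isolated_tests`, the project name `build-42`, and the test script `./run_tests` are hypothetical; the -p option sets the Compose project name, which is what keeps parallel environments apart. The COMPOSE variable exists only so the sequence can be dry-run without Docker.

```shell
#!/bin/sh
# COMPOSE can be overridden (for example with a stub) to dry-run without Docker.
COMPOSE="${COMPOSE:-docker-compose}"

# Bring the environment up under its own project name, run the given test
# command, always tear the environment down, and return the test's exit status.
run_isolated_tests() {
    project="$1"; shift
    $COMPOSE -p "$project" up -d || return 1
    "$@"
    status=$?
    $COMPOSE -p "$project" down
    return "$status"
}

# Example (requires Docker and a docker-compose.yml in the current directory):
#   run_isolated_tests "build-42" ./run_tests
```

Tearing down even after a failed test run keeps a CI host from accumulating stale containers.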

Source: https://docs.docker.com/compose/overview/

docker compose vs docker stack (swarm)

In recent releases, a few things have happened in the Docker world. Swarm mode got integrated into the Docker Engine in 1.12, and has brought with it several new tools. Among others, it’s possible to make use of docker-compose.yml files to bring up stacks of Docker containers, without having to install Docker Compose.

The command is called docker stack, and it looks very similar to docker-compose. Both docker-compose and the new docker stack commands can be used with docker-compose.yml files which are written according to the specification of version 3. For your version 2 reliant projects, you’ll have to continue using docker-compose. If you want to upgrade, it’s not a lot of work though.

As docker stack does everything docker compose does, it’s a safe bet that docker stack will prevail. This means that docker-compose will probably be deprecated and won’t be supported eventually.

However, switching your workflows to using docker stack is neither hard nor much overhead for most users. You can do it while upgrading your docker compose files from version 2 to 3 with comparably low effort.

If you’re new to the Docker world, or are choosing the technology to use for a new project - by all means, stick to using docker stack deploy.

Source: https://vsupalov.com/difference-docker-compose-and-docker-stack/

Install

docker-compose is not part of the standard Docker installation. We have to install it from GitHub.

sudo curl -L https://github.com/docker/compose/releases/download/1.21.2/docker-compose-$(uname -s)-$(uname -m) -o /usr/local/bin/docker-compose
sudo chmod +x /usr/local/bin/docker-compose

# docker-compose --version
docker-compose version 1.21.2, build a133471

How to use docker-compose

I will demonstrate the potential of docker-compose through a simple example. We are going to build a WordPress service that requires two containers: one for the MySQL database and one for WordPress itself. To make the example a little more complicated, we won't simply download the WordPress image from Docker Hub; we are going to build our own, using the wordpress image as the base image. So with a simple docker-compose.yml file we can build as many images as we want and construct containers from them in the given order. Isn't that great?

$ mkdir wp-example
$ cd wp-example
$ mkdir wordpress
$ touch docker-compose.yml

[wp-example]# ll
total 8
-rw-r--r-- 1 root root  148 Jun 23 23:09 docker-compose.yml
drwxr-xr-x 2 root root 4096 Jun 23 22:58 wordpress

$ cd wordpress
$ touch Dockerfile
$ touch example.html

[wordpress]# ll
total 8
-rw-r--r-- 1 root root 159 Jun 23 22:58 Dockerfile
-rw-r--r-- 1 root root  18 Jun 23 22:44 example.html

Dockerfile

FROM wordpress:latest

COPY ["./example.html","/var/www/html/example.html"]

VOLUME /var/www/html

ENTRYPOINT ["docker-entrypoint.sh"]

CMD ["apache2-foreground"]

docker-compose.yml

version: '3'
services:
  wordpress:
    container_name: my-wordpress-container
    image: mywordpress:6.0
    build: ./wordpress
    links:
      - db:mysql
    ports:
      - 8080:80
  db:
    image: mariadb
    environment:
      MYSQL_ROOT_PASSWORD: example

Note

Only spaces can be used for indentation. Tabs are not supported.
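A stray tab is hard to spot by eye, so a quick check before running Compose can save a confusing parse error. The sketch below is one possible check; the helper name `has_tabs` and the two throw-away files are illustrative.

```shell
#!/bin/sh
# Succeed (exit 0) if the file contains at least one tab character.
has_tabs() {
    grep -q "$(printf '\t')" "$1"
}

# Demo on two throw-away files: one indented with spaces, one with a tab.
tmpdir=$(mktemp -d)
printf 'services:\n  db:\n    image: mariadb\n' > "$tmpdir/good.yml"
printf 'services:\n\tdb:\n' > "$tmpdir/bad.yml"

for f in "$tmpdir/good.yml" "$tmpdir/bad.yml"; do
    if has_tabs "$f"; then
        echo "$(basename "$f"): contains tabs"
    else
        echo "$(basename "$f"): spaces only"
    fi
done
rm -rf "$tmpdir"

# docker-compose config also parses the file and reports syntax errors.
```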

[wp-example]# docker-compose up -d
Building wordpress
Step 1/5 : FROM wordpress:latest
 ---> 7801d36d734c
Step 2/5 : COPY ./example.html /var/www/html/example.html
 ---> ab67aee3c270
Removing intermediate container a0894a2e834f
Step 3/5 : VOLUME /var/www/html
 ---> Running in 470025d9c877
 ---> 9890d3cd9f0a
Removing intermediate container 470025d9c877
Step 4/5 : ENTRYPOINT docker-entrypoint.sh
 ---> Running in 09548484b9b2
 ---> 555754d6a3a7
Removing intermediate container 09548484b9b2
Step 5/5 : CMD apache2-foreground
 ---> Running in 035fcfc0876d
 ---> 076e75c72b58
Removing intermediate container 035fcfc0876d
Successfully built 076e75c72b58
Successfully tagged wp2-example_wordpress:latest
WARNING: Image for service wordpress was built because it did not already exist. To rebuild this image you must use `docker-compose build` or `docker-compose up --build`.
Creating wp2-example_db_1 ... done
Creating wp2-example_wordpress_1 ... done
Attaching to wp2-example_db_1, wp2-example_wordpress_1

[wp-example]# docker-compose ps

Name Command State Ports

---------------------------------------------------------------------------------------

wp2-example_db_1 docker-entrypoint.sh mysqld Up 3306/tcp

wp2-example_wordpress_1 docker-entrypoint.sh apach ... Up 0.0.0.0:8080->80/tcp

# docker ps
CONTAINER ID   IMAGE                   COMMAND                  CREATED              STATUS              PORTS                  NAMES
8ec2920234b6   wp2-example_wordpress   "docker-entrypoint..."   About a minute ago   Up About a minute   0.0.0.0:8080->80/tcp   wp2-example_wordpress_1
786fb7da1ca7   mariadb                 "docker-entrypoint..."   About a minute ago   Up About a minute   3306/tcp               wp2-example_db_1

docker-compose.yml syntax

There are three major versions of compose file format.

compose only

- build: Configuration options that are applied at build time

- The object form is allowed in Version 2 and up.

- In version 1, using build together with image is not allowed. Attempting to do so results in an error.

- From version 3, if you specify image as well as build, then Compose names the built image with the name and optional tag specified in image

- Note: This option is ignored when deploying a stack in swarm mode with a (version 3) Compose file. The docker stack command accepts only pre-built images.

- DOCKERFILE: An alternate Dockerfile name (for example Dockerfile-alternate) to build with, relative to the build context.

- CONTEXT: Either a path to a directory containing a Dockerfile, or a url to a git repository.

- TARGET: Build the specified stage as defined inside the Dockerfile (added in 3.4)

version: '3'
services:
  webapp:
    build:
      context: ./dir
      dockerfile: Dockerfile-alternate
      target: prod

- container_name: define the name of the container that is created for the service.

Note

- If the build and the image is both provided, the image will be created with the name given in the image parameter.

- If the image tag is not provided, the default name of the new image is: <directory name>_<service name>

- If the container_name is not provided, the default name of the container is: <directory name>_<service name>_<index> (e.g. wp2-example_wordpress_1 in the example above)
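The default naming can be sketched as two small functions. They reproduce the names seen in the output earlier on this page (Compose 1.21.2); other Compose releases may normalize the directory name or use a different separator, so treat this as an illustration rather than a specification. The function names are hypothetical.

```shell
#!/bin/sh
# Default names as they appear in the docker-compose output above.
# Arguments: directory (project) name, service name, optional container index.
default_image_name()     { printf '%s_%s\n' "$1" "$2"; }
default_container_name() { printf '%s_%s_%s\n' "$1" "$2" "${3:-1}"; }

default_image_name wp2-example wordpress
default_container_name wp2-example db 1
```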

- container_name: Specify a custom container name, rather than a generated default name

- external_links: Link to containers started outside this docker-compose.yml or even outside of Compose, especially for containers that provide shared or common services. external_links follow semantics similar to links when specifying both the container name and the link alias (CONTAINER:ALIAS).

external_links:
  - redis_1
  - project_db_1:mysql
  - project_db_1:postgresql

- network_mode: Network mode. Use the same values as the docker client --network parameter, plus the special form service:[service name].

network_mode: "bridge"
network_mode: "host"
network_mode: "none"
network_mode: "service:[service name]"
network_mode: "container:[container name/id]"

swarm only

- deploy: This only takes effect when deploying to a swarm with docker stack deploy, and is ignored by docker-compose up and docker-compose run.

- ENDPOINT_MODE:

- vip: (default): A single virtual IP for the service. Swarm does the load balancing.

- dnsrr: (DNS round-robin): A DNS query returns the list of nodes, so that we can do our own load balancing.

- MODE: Either global (exactly one container per swarm node) or replicated (a specified number of containers)

- PLACEMENT:

- REPLICAS: If the service is replicated (which is the default), specify the number of containers that should be running at any given time.

- RESOURCES

- RESTART_POLICY:


version: '3'
services:
  redis:
    image: redis:alpine
    deploy:
      replicas: 6
      update_config:
        parallelism: 2
        delay: 10s
      resources:
        limits:
          cpus: '0.50'
          memory: 50M
        reservations:
          cpus: '0.25'
          memory: 20M
      restart_policy:
        condition: on-failure
        delay: 5s
        max_attempts: 3
        window: 120s
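Since the deploy section is only honored by docker stack deploy, a file like the one above would be applied on a swarm manager roughly as follows. This is a sketch: the stack name `demo` and the helper names are hypothetical, and the DOCKER variable exists only so the commands can be dry-run with a stub. Swarm prefixes each service with the stack name, which is why the scale helper builds `<stack>_<service>`.

```shell
#!/bin/sh
# DOCKER can be overridden (for example with a stub) to dry-run without a swarm.
DOCKER="${DOCKER:-docker}"

# Deploy a version 3 compose file as a stack, then list the stack's services.
deploy_stack() {
    compose_file="$1"; stack="$2"
    $DOCKER stack deploy -c "$compose_file" "$stack" &&
    $DOCKER stack services "$stack"
}

# Change the replica count of one service of a deployed stack.
scale_service() {
    stack="$1"; service="$2"; replicas="$3"
    $DOCKER service scale "${stack}_${service}=${replicas}"
}

# Example (run on a swarm manager):
#   deploy_stack docker-compose.yml demo
#   scale_service demo redis 8
```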

compose && swarm common part

- command: Override the default command.

command: ["bundle", "exec", "thin", "-p", "3000"]

- ports: specify the host:container port mapping like with the -p switch in the run command.

ports:
  - "3000"
  - "3000-3005"
  - "8000:8000"
  - "9090-9091:8080-8081"
  - "49100:22"
  - "127.0.0.1:8001:8001"
  - "127.0.0.1:5000-5010:5000-5010"
  - "6060:6060/udp"

- dns: Custom DNS servers. Can be a single value or a list.

dns: 8.8.8.8

dns:
  - 8.8.8.8
  - 9.9.9.9

- dns_search: Custom DNS search domains. Can be a single value or a list.

dns_search: example.com

dns_search:
  - dc1.example.com
  - dc2.example.com

- entrypoint: Override the default entrypoint.

entrypoint: /code/entrypoint.sh

or as a list:

entrypoint:
  - php
  - -d

Note: Setting entrypoint both overrides any default entrypoint set on the service’s image with the ENTRYPOINT Dockerfile instruction, and clears out any default command on the image - meaning that if there’s a CMD instruction in the Dockerfile, it is ignored.

- env_file: Add environment variables from a file. Can be a single value or a list.

env_file:
  - ./common.env
  - ./apps/web.env

- environment: Add environment variables

environment:
  - RACK_ENV=development
  - SHOW=true

- expose: Expose ports without publishing them to the host machine - they’ll only be accessible to linked services. Only the internal port can be specified.

expose:
  - "3000"
  - "8000"

- extends: Extend another service, in the current file or another, optionally overriding configuration.

extends:
  file: common.yml   # from this file
  service: webapp    # extend this service

- extra_hosts: Add hostname mappings. Use the same values as the docker client --add-host parameter.

extra_hosts:
  - "somehost:162.242.195.82"
  - "otherhost:50.31.209.229"

- image: Specify the image to start the container from. Can either be a repository/tag or a partial image ID. If the image does not exist, Compose attempts to pull it.

Note: In the version 1 file format, using build together with image is not allowed. Attempting to do so results in an error.

- labels: Add metadata to containers using Docker labels. You can use either an array or a dictionary.

labels:
  - "com.example.description=Accounting webapp"
  - "com.example.department=Finance"

- links: Link to containers in another service. Either specify both the service name and a link alias (SERVICE:ALIAS), or just the service name.

Links are a legacy option. We recommend using networks instead.

web:
  links:
    - db
    - db:database
    - redis

Containers for the linked service are reachable at a hostname identical to the alias, or the service name if no alias was specified. Links also express dependency between services in the same way as depends_on, so they determine the order of service startup. Note: If you define both links and networks, services with links between them must share at least one network in common in order to communicate.

- networks: Networks to join. The container created from this service will be connected to the given network(s). (web to new, worker to legacy)

services:
  web:
    build: ./web
    networks:
      - new
  worker:
    build: ./worker
    networks:
      - legacy

- volumes: specify the host path (or volume name) to container path mapping, as with the -v option of the run command. Long and short syntax:

version: "3.2"
services:
  web:
    image: nginx:alpine
    volumes:
      - type: volume
        source: mydata
        target: /data
        volume:
          nocopy: true
      - type: bind
        source: ./static
        target: /opt/app/static
  db:
    image: postgres:latest
    volumes:
      - "/var/run/postgres/postgres.sock:/var/run/postgres/postgres.sock"
      - "dbdata:/var/lib/postgresql/data"
# Named volumes used by the services must be declared at the top level.
volumes:
  mydata:
  dbdata:

Good to know

You can control the order of service startup with the depends_on option. Compose always starts containers in dependency order, where dependencies are determined by depends_on, links, volumes_from, and network_mode: "service:...". However, Compose does not wait until a container is “ready” (whatever that means for your particular application) - only until it’s running. There’s a good reason for this.
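Because Compose only waits for a container to be running, an application that needs its dependency to be ready usually has to retry on its own. A minimal retry loop could look like the sketch below. The function name `wait_for` is illustrative, and the nc port check and the service name db in the usage comment are assumptions, not something Compose provides.

```shell
#!/bin/sh
# Retry a command until it succeeds or the attempts run out.
# Usage: wait_for <attempts> <delay-seconds> <command> [args...]
wait_for() {
    attempts="$1"; delay="$2"; shift 2
    i=1
    while [ "$i" -le "$attempts" ]; do
        if "$@"; then return 0; fi
        i=$((i + 1))
        sleep "$delay"
    done
    return 1
}

# Example: wait up to 30 x 2 seconds for a (hypothetical) database port check
# to pass before starting the application:
#   wait_for 30 2 nc -z db 3306 && exec apache2-foreground
```

Such a loop would typically live in the container's entrypoint script, so the check also runs again after a restart.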

SWARM

Introduction

A swarm is a group of machines that are running Docker and joined into a cluster. After that has happened, you continue to run the Docker commands you’re used to, but now they are executed on a cluster by a swarm manager. The machines in a swarm can be physical or virtual. After joining a swarm, they are referred to as nodes.

Source: https://docs.docker.com/get-started/part4/#introduction

docker@manager:~$ docker node ls
ID                            HOSTNAME   STATUS   AVAILABILITY   MANAGER STATUS   ENGINE VERSION
uzhpeg3ug0ip6f9o3l51c749y *   manager    Ready    Active         Leader           18.05.0-ce
kstfblenhtb0dbpzkxo2olssn     worker1    Ready    Active                          18.05.0-ce
pf8jdfmlegbx7dogi6jnbxu2y     worker2    Ready    Active                          18.05.0-ce

https://docs.docker.com/engine/swarm/swarm-tutorial/deploy-service/

Create swarm

docker@manager:~$ docker swarm init --advertise-addr 192.168.42.118

Swarm initialized: current node (uzhpeg3ug0ip6f9o3l51c749y) is now a manager.

To add a worker to this swarm, run the following command:

docker swarm join --token SWMTKN-1-21qaw0xpv9tj2kjxbmj6jn2jqpbcjjkyeze2nno9yy0wvt0vp9-9k2yyit1x8a11ca50ejxalzhz 192.168.42.118:2377

To add a manager to this swarm, run 'docker swarm join-token manager' and follow the instructions.
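With docker-machine managed hosts, the join command does not have to be typed on every worker; it can be sent over docker-machine ssh. The sketch below assumes machines named worker1 and worker2 already exist (as in the node listing above). The helper name `join_workers` is hypothetical, and DOCKER_MACHINE exists only so the loop can be dry-run with a stub.

```shell
#!/bin/sh
# DOCKER_MACHINE can be overridden with a stub to print instead of execute.
DOCKER_MACHINE="${DOCKER_MACHINE:-docker-machine}"

# Join each named machine to the swarm as a worker.
# Arguments: join token, manager address (ip:port), one or more machine names.
join_workers() {
    token="$1"; manager_addr="$2"; shift 2
    for node in "$@"; do
        $DOCKER_MACHINE ssh "$node" "docker swarm join --token $token $manager_addr" || return 1
    done
}

# Example, using the manager address from the output above. The worker token
# can be printed on the manager with: docker swarm join-token -q worker
#   join_workers "$TOKEN" 192.168.42.118:2377 worker1 worker2
```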